Imputation Using Random Sampling and K-Nearest Neighbors

In machine learning, imputation means filling in missing data with synthetic values derived from the data that are present. Missing data are any NaN or null cells in the DataFrame. They can be problematic for training machine learning models, since many estimators do not accept NaN inputs.

The first method of imputation described in this post is designed for categorical data. If the feature you want to impute is continuous, then you’ll want to use the imputation functions built into scikit-learn, as detailed later in this post.

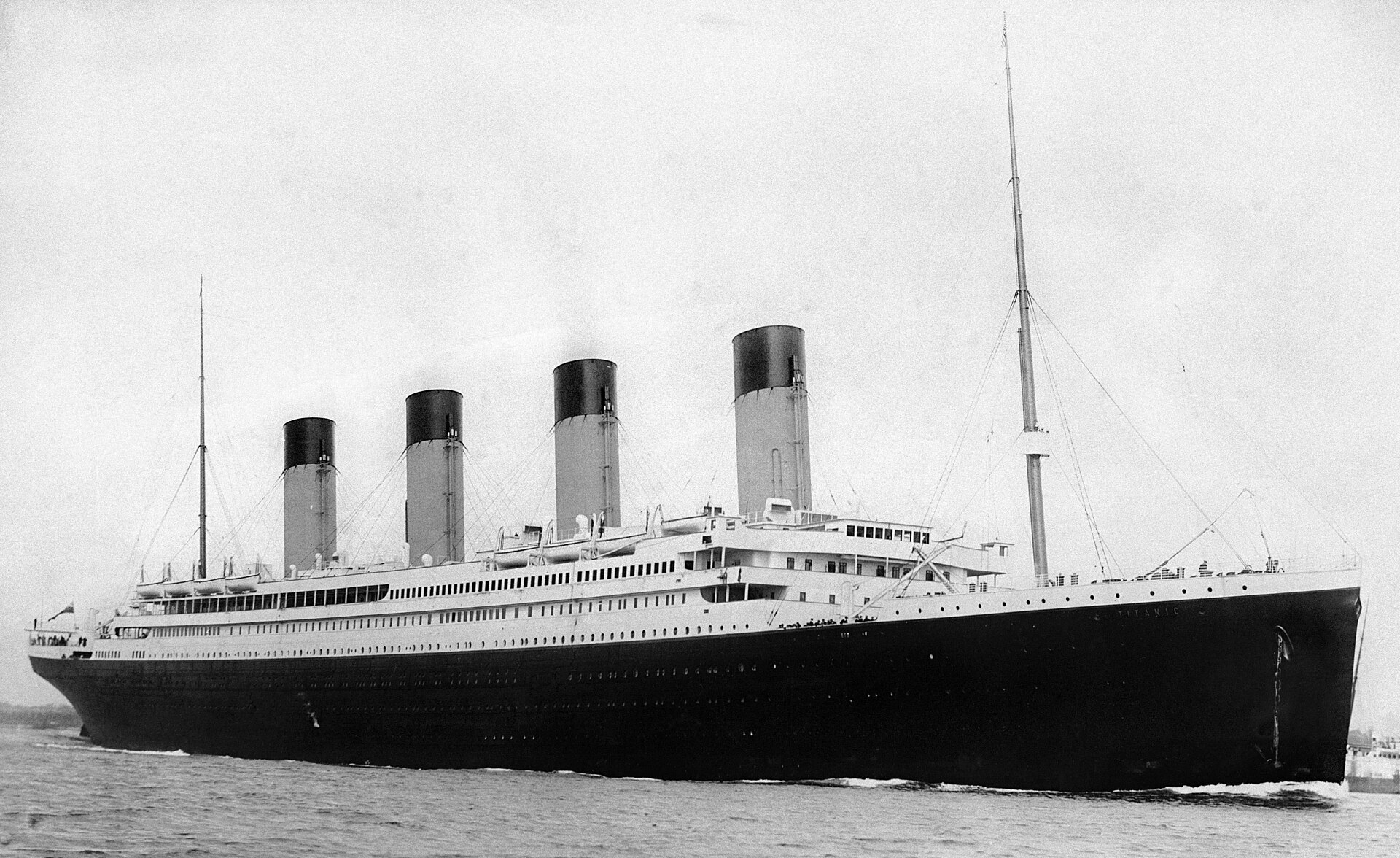

Because it contains categorical features, we’ll be using the Titanic dataset hosted on OpenML to demonstrate.

from sklearn.datasets import fetch_openml

from sklearn.impute import KNNImputer

from sklearn.preprocessing import LabelEncoder

import numpy as np

import pandas as pd

import random

random.seed(1)

# Fetch the Titanic dataset

data = fetch_openml(data_id=40945, as_frame=True)

df = data.frame

df.head()

| | pclass | survived | name | sex | age | sibsp | parch | ticket | fare | cabin | embarked | boat | body | home.dest |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 1 | Allen, Miss. Elisabeth Walton | female | 29.0000 | 0 | 0 | 24160 | 211.3375 | B5 | S | 2 | NaN | St Louis, MO |
| 1 | 1 | 1 | Allison, Master. Hudson Trevor | male | 0.9167 | 1 | 2 | 113781 | 151.5500 | C22 C26 | S | 11 | NaN | Montreal, PQ / Chesterville, ON |
| 2 | 1 | 0 | Allison, Miss. Helen Loraine | female | 2.0000 | 1 | 2 | 113781 | 151.5500 | C22 C26 | S | NaN | NaN | Montreal, PQ / Chesterville, ON |
| 3 | 1 | 0 | Allison, Mr. Hudson Joshua Creighton | male | 30.0000 | 1 | 2 | 113781 | 151.5500 | C22 C26 | S | NaN | 135.0 | Montreal, PQ / Chesterville, ON |
| 4 | 1 | 0 | Allison, Mrs. Hudson J C (Bessie Waldo Daniels) | female | 25.0000 | 1 | 2 | 113781 | 151.5500 | C22 C26 | S | NaN | NaN | Montreal, PQ / Chesterville, ON |

We shuffle the DataFrame for the purpose of randomly deleting values.

The call to reset_index is necessary because, otherwise, our later slicing of the DataFrame will take all elements up to the nth index instead of subsetting up to the nth row.
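To see why the index reset matters, here is a minimal sketch on a hypothetical five-row DataFrame (not the Titanic data). The fixed reordering stands in for a shuffle so that the result is reproducible; the names toy, shuffled, by_label, and by_row are made up for the illustration.

```python
import pandas as pd

toy = pd.DataFrame({'value': list('abcde')})

# Stand-in for a shuffle: a fixed reordering, so the original labels are out of order.
shuffled = toy.loc[[3, 0, 4, 2, 1]]

# .loc slices by label: this runs from the first row through the row labelled 2.
by_label = shuffled.loc[:2]

# After reset_index, labels match row positions, so .loc[:2] is the first three rows.
by_row = shuffled.reset_index(drop=True).loc[:2]

print(list(by_label['value']))  # ['d', 'a', 'e', 'c']
print(list(by_row['value']))    # ['d', 'a', 'e']
```

Without the reset, the slice returns four rows here rather than three, because the row labelled 2 happens to sit in the fourth position.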

# shuffled dataframe

sdf = df.sample(frac=1).reset_index(drop=True)

sdf['sex'].value_counts()

sex
male 843
female 466
Name: count, dtype: int64

Because each category of the sex column contains far more than 100 values, it is impossible for the code below, which deletes 100 values, to remove a category entirely.

NUM_DELETE = 100

sdf.loc[:NUM_DELETE - 1, ['sex']] = None

sdf['sex'].value_counts()

sex
male 780
female 429
Name: count, dtype: int64

In a previous post, we talked about the Mind-Reading Machine, which uses a Markov Chain. In a Markov Chain, the next state depends only on the current state.

This method of imputation does not depend on any previous state, so it is not a Markov Chain. It is, however, similar to the Mind-Reading Machine in that we choose at random from an array, so each unique element is chosen with probability proportional to how frequently it appears in the array.
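The proportionality claim can be checked with a small sketch using only the standard library. The list observed is a hypothetical stand-in built from the counts shown above (780 male, 429 female), and the number of draws is arbitrary.

```python
import random
from collections import Counter

random.seed(1)

# Hypothetical stand-in for the non-missing values of the sex column.
observed = ['male'] * 780 + ['female'] * 429

# Sampling with replacement from the raw values: common values are drawn more often.
draws = random.choices(observed, k=100_000)

share = Counter(draws)['male'] / len(draws)
expected = 780 / len(observed)

print(f"share of 'male' in draws:  {share:.3f}")
print(f"share of 'male' in source: {expected:.3f}")
```

With this many draws the two shares should agree closely, which is the sense in which the imputed values follow the distribution of the existing ones.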

sexes = sdf[~sdf['sex'].isna()]['sex']

choices = random.choices(sexes.array, k=NUM_DELETE)

sdf.loc[:NUM_DELETE - 1, ['sex']] = choices

sdf.head()

| | pclass | survived | name | sex | age | sibsp | parch | ticket | fare | cabin | embarked | boat | body | home.dest |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 0 | Allison, Miss. Helen Loraine | female | 2.0 | 1 | 2 | 113781 | 151.55 | C22 C26 | S | NaN | NaN | Montreal, PQ / Chesterville, ON |
| 1 | 3 | 0 | Vander Planke, Mrs. Julius (Emelia Maria Vande... | male | 31.0 | 1 | 0 | 345763 | 18.00 | NaN | S | NaN | NaN | NaN |
| 2 | 3 | 0 | Wittevrongel, Mr. Camille | male | 36.0 | 0 | 0 | 345771 | 9.50 | NaN | S | NaN | NaN | NaN |
| 3 | 3 | 0 | Davies, Mr. John Samuel | male | 21.0 | 2 | 0 | A/4 48871 | 24.15 | NaN | S | NaN | NaN | West Bromwich, England Pontiac, MI |
| 4 | 2 | 0 | Hart, Mr. Benjamin | male | 43.0 | 1 | 1 | F.C.C. 13529 | 26.25 | NaN | S | NaN | NaN | Ilford, Essex / Winnipeg, MB |

In the original dataset, some of the age values are missing. Fortunately, scikit-learn contains convenient means of imputing data, including numerical data.

First, we encode sex as elements of the set {0, 1}, because

- I am working under the assumption that sex will be useful for determining body.

- This feature is currently categorical.

- The K-Nearest Neighbors imputer (AKA KNNImputer) requires that the input be numerical. (Scikit-Learn)
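As a quick illustration of what the encoding produces, here is a minimal sketch on a hypothetical four-element list rather than the Titanic column. LabelEncoder stores the labels it has seen in sorted order in classes_, and each label's position there is its integer code.

```python
from sklearn.preprocessing import LabelEncoder

# Hypothetical values, not the Titanic column.
toy_sex = ['male', 'female', 'female', 'male']

encoder = LabelEncoder()
codes = encoder.fit_transform(toy_sex)

# classes_ is sorted, so 'female' gets code 0 and 'male' gets code 1.
mapping = {str(label): code for code, label in enumerate(encoder.classes_)}

# inverse_transform recovers the original labels from the codes.
decoded = encoder.inverse_transform(codes)

print(mapping)        # {'female': 0, 'male': 1}
print(list(decoded))  # ['male', 'female', 'female', 'male']
```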

# Encode the categorical labels

encoder = LabelEncoder()

sdf['sex_encoded'] = encoder.fit_transform(sdf['sex'])

As mentioned, KNNImputer only wants numeric types. We will therefore select only the numeric columns from the DataFrame.

numerics = ['int16', 'int32', 'int64', 'float16', 'float32', 'float64']

selected_columns = sdf.select_dtypes(include=numerics).columns

selected_columns

Index(['pclass', 'age', 'sibsp', 'parch', 'fare', 'body', 'sex_encoded'], dtype='object')

A high-level interpretation of the fit_transform method of KNNImputer is as follows:

- For each row, for each cell, if that cell is not missing, do nothing. Otherwise, proceed to the next step.

- Create a value for that cell using the K-Nearest Neighbors algorithm. The imputer finds the rows closest to this one, measured on the features that both rows have, and averages those neighbors’ values for the missing feature (five neighbors by default).
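The two steps above can be sketched from scratch with NumPy. This is a simplified illustration under stated assumptions, not scikit-learn's implementation: the helper names nan_euclidean and knn_impute are made up here, neighbors are weighted uniformly, and the toy matrix is hypothetical.

```python
import numpy as np

def nan_euclidean(a, b):
    """Euclidean distance over the coordinates present in both rows,
    rescaled to compensate for the coordinates that were skipped."""
    both = ~np.isnan(a) & ~np.isnan(b)
    if not both.any():
        return np.inf  # no shared coordinates, so no usable distance
    scale = a.size / both.sum()
    return float(np.sqrt(scale * np.sum((a[both] - b[both]) ** 2)))

def knn_impute(X, k=2):
    """Fill each NaN with the mean of that column over the k nearest donor rows."""
    X = np.asarray(X, dtype=float)
    filled = X.copy()
    for i, j in zip(*np.where(np.isnan(X))):
        # Donors are the other rows that actually have a value in column j.
        donors = [r for r in range(len(X)) if r != i and not np.isnan(X[r, j])]
        donors.sort(key=lambda r: nan_euclidean(X[i], X[r]))
        filled[i, j] = X[donors[:k], j].mean()
    return filled

toy = [[1.0, 2.0, np.nan],
       [3.0, 4.0, 3.0],
       [np.nan, 6.0, 5.0],
       [8.0, 8.0, 7.0]]

result = knn_impute(toy, k=2)
print(result)
```

In this toy matrix, the missing cell in the first row is filled with 4.0, the mean of 3.0 and 5.0 from its two nearest rows, and the missing cell in the third row is filled with 5.5, the mean of 3.0 and 8.0.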

imp = KNNImputer().set_output(transform='pandas')

transformed = imp.fit_transform(sdf[selected_columns])

sdf[selected_columns] = transformed

sdf.head()

| | pclass | survived | name | sex | age | sibsp | parch | ticket | fare | cabin | embarked | boat | body | home.dest | sex_encoded |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1.0 | 0 | Allison, Miss. Helen Loraine | female | 2.0 | 1.0 | 2.0 | 113781 | 151.55 | C22 C26 | S | NaN | 167.4 | Montreal, PQ / Chesterville, ON | 0.0 |
| 1 | 3.0 | 0 | Vander Planke, Mrs. Julius (Emelia Maria Vande... | male | 31.0 | 1.0 | 0.0 | 345763 | 18.00 | NaN | S | NaN | 197.0 | NaN | 1.0 |
| 2 | 3.0 | 0 | Wittevrongel, Mr. Camille | male | 36.0 | 0.0 | 0.0 | 345771 | 9.50 | NaN | S | NaN | 156.4 | NaN | 1.0 |
| 3 | 3.0 | 0 | Davies, Mr. John Samuel | male | 21.0 | 2.0 | 0.0 | A/4 48871 | 24.15 | NaN | S | NaN | 171.4 | West Bromwich, England Pontiac, MI | 1.0 |
| 4 | 2.0 | 0 | Hart, Mr. Benjamin | male | 43.0 | 1.0 | 1.0 | F.C.C. 13529 | 26.25 | NaN | S | NaN | 136.4 | Ilford, Essex / Winnipeg, MB | 1.0 |

The output below shows that the only columns still containing missing values are the ones that we didn’t intend to impute.

sdf.isna().sum()

pclass 0
survived 0
name 0
sex 0
age 0
sibsp 0
parch 0
ticket 0
fare 0
cabin 1014
embarked 2
boat 823
body 0
home.dest 564
sex_encoded 0
dtype: int64

There are scenarios in which deleting rows or columns containing missing values is acceptable. Whether and how we do so depends on a number of factors, including the number of missing values and why those values are missing (if we can know the reason).
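As one illustration of the deletion route, here is a minimal sketch on a hypothetical toy DataFrame (not the Titanic data). The 50% cutoff for dropping a column is an arbitrary assumption for the example, not a recommended rule.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data with gaps in every column.
toy = pd.DataFrame({
    'age': [22.0, np.nan, 35.0, 41.0],
    'cabin': [np.nan, np.nan, 'C85', np.nan],
    'fare': [7.25, 71.28, np.nan, 8.05],
})

# Fraction of missing values per column.
missing_share = toy.isna().mean()

# Drop columns that are mostly empty (assumed cutoff: more than half missing).
kept_columns = toy.loc[:, missing_share <= 0.5]

# Then drop any remaining rows that still contain a missing value.
complete_rows = kept_columns.dropna()

print(list(kept_columns.columns))  # ['age', 'fare']
print(len(complete_rows))          # 2
```

Here the cabin column is dropped because three of its four values are missing, and two of the four rows survive the row-wise deletion.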

An extended discussion of those factors is outside the scope of this post. Instead, I’ll leave the reader with the following takeaways.

To Sum Up

We showed how to replace missing values solely by randomly selecting from the distribution of those existing values.

We showed how to impute missing numerical values using a built-in scikit-learn method.

Future Work

In a future post, we’ll explore a method of training a machine learning model without imputing missing data and compare it with imputation.

Bibliography

“7.4. Imputation of Missing Values.” Scikit-Learn, https://scikit-learn.org/stable/modules/impute.html. Accessed 13 Feb. 2026.

OpenML. https://www.openml.org/search?type=data&sort=version&status=any&order=asc&exact_name=Titanic&id=40945. Accessed 13 Feb. 2026.